In a previous blog post, Dave Goossens argued that Mythos readiness starts with total visibility, because you can’t defend what you can’t see. There is a second reality converging on the same problem:

The speed and complexity of modern IT and security operations are pushing organizations past the limits of what manual processes can handle.

Automation is becoming inevitable, and agentic AI is quickly making scenarios that were impossible not long ago suddenly possible. That changes the stakes of the visibility problem entirely. When humans operate on incomplete data, they can compensate. Autonomous systems working from the same incomplete data can’t and won’t adjust for unknowns. The question is whether the data your organization has about itself is ready for what is coming. In most cases, it is not.

Automation Is No Longer Optional

The race to deploy AI agents is accelerating across security operations, IT remediation, patch orchestration, and infrastructure monitoring. The vendors promising to automate these workflows insist that the technology exists. The pressure to adopt is real.

The cybersecurity workforce gap now stands at 4.8 million unfilled positions globally, with 88% of teams reporting significant incidents directly tied to skills shortages (ISC2 2025 Workforce Study). IT headcount growth expectations are at their lowest point since 2011 (Harvey Nash / IANS via CIO.com). The teams that remain are stretched thinner every quarter, managing environments that are ever more complex.

At the same time, the window between disclosure and exploitation is closing fast. In 2023, the median time from a vulnerability being disclosed to the first observed exploit was six days. By 2024, it had collapsed to four hours (Zero Day Clock). In 2026, the majority of exploited CVEs are expected to be zero-days, weaponized before they are even publicly disclosed. The average time to fix a security flaw has moved in the opposite direction: 252 days, up 47% since 2020 (Veracode 2026). That is the gap defenders are operating in. And with 84% of CIOs now ranking cost optimization as their top priority, ahead of security for the first time (Gartner via Splunk), the resources to close it manually are not coming.

So we have a staffing wall you cannot hire through, a threat accelerating beyond manual response, and a budget that demands efficiency. Automation of IT and security workflows is not a strategic choice. It is an operational necessity.

But Every Agent Asks the Same First Question

This is what the conversation about AI-powered automation consistently skips. Every one of those agents, before it does anything useful, will ask the same question: “What do I know?”

What it knows is whatever your organization already knows about itself. And based on many conversations I have had with CIOs and CISOs alike, that picture has gaps.

According to Gartner, 40% of enterprise infrastructure is currently invisible to IT. Not poorly documented or imperfectly mapped, but simply unknown. That is the environment your AI agent steps into on day one.

Most organizations point to their CMDB as the answer. The track record is hard to ignore: 75% of CMDBs fail to deliver value due to data quality problems (Gartner, 2025). Not because the tools are wrong, but because maintaining complete, accurate configuration data at the speed a modern environment changes is genuinely hard.

A 2026 study by Cloudera and Harvard Business Review Analytic Services found that 46% of organizations identify data quality as one of the most critical components of their AI strategy. Gartner confirms the consequence: 30% of generative AI projects will be abandoned after proof of concept, with poor data quality as the leading reason.

Organizations are not failing at AI because the models are wrong. They are failing because the data beneath the models is incomplete.

The Failure Mode No One Is Planning For

When people make decisions with incomplete information, there are signals: hesitation, requests for clarification, escalation, and so on. Autonomous systems do not work this way.

Say you deploy an AI agent to manage your patch remediation cycle. The agent builds a model of your environment from whatever data it can access. If that data reflects 60% of your assets, the agent manages 60% of your environment and produces a report that reads like 100%. The remaining 40% does not appear as an error. It simply does not appear.
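The arithmetic behind this gap can be sketched in a few lines of Python. This is a minimal illustration, not a real agent: the asset IDs, the `patch_report` and `true_coverage` helpers, and the idea that a full list of real assets is available for comparison are all assumptions made for the example.

```python
def patch_report(known_assets: set[str], patched: set[str]) -> dict:
    """Report the way an agent would: coverage relative to what it knows about."""
    patched_in_scope = patched & known_assets
    return {
        "assets_in_scope": len(known_assets),
        "patched": len(patched_in_scope),
        "reported_coverage": len(patched_in_scope) / len(known_assets),
    }


def true_coverage(all_assets: set[str], patched: set[str]) -> float:
    """Coverage relative to everything that actually exists."""
    return len(patched & all_assets) / len(all_assets)


# Hypothetical environment: 10 real assets, only 6 present in the inventory.
all_assets = {f"host-{i:02d}" for i in range(10)}
known_assets = {f"host-{i:02d}" for i in range(6)}

# The agent patches everything it can see.
patched = set(known_assets)

report = patch_report(known_assets, patched)
unseen = sorted(all_assets - known_assets)

print(report["reported_coverage"])         # 1.0
print(true_coverage(all_assets, patched))  # 0.6
print(unseen)                              # assets that never show up in the report
```

Nothing in the agent's own report hints at the four unseen hosts. The only way to surface them is to compare against an independent discovery source.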

Now scale that up. Most organizations will not deploy one agent. They will deploy several: one for vulnerability management, one for compliance, one for IT operations, others embedded in the tools they already use. If those agents are operating on different data sources with different update cycles and different coverage, they will not just miss things independently. They will contradict each other. One agent patches a system another agent does not know exists. One flags a risk on an asset another considers compliant. The result goes beyond blind spots: conflicting actions, executed autonomously, at machine speed, with no human in the loop to catch the contradiction.
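One way to see how such contradictions arise is to compare the views two agents hold of the same environment. The sketch below assumes each agent's view can be reduced to a mapping from asset ID to a status label; the `compare_views` function, the agent names, and the status labels are hypothetical.

```python
def compare_views(view_a: dict[str, str], view_b: dict[str, str]) -> dict:
    """Find where two agents' views of the environment diverge.

    Each view maps an asset ID to the status that agent assigns to it.
    """
    shared = view_a.keys() & view_b.keys()
    return {
        # Assets one agent acts on while the other does not know they exist.
        "only_in_a": sorted(view_a.keys() - view_b.keys()),
        "only_in_b": sorted(view_b.keys() - view_a.keys()),
        # Assets both agents know about but assess differently.
        "conflicting": sorted(a for a in shared if view_a[a] != view_b[a]),
    }


# Hypothetical views from a vulnerability agent and a compliance agent.
vuln_agent = {"db-01": "at_risk", "web-01": "ok", "web-02": "ok"}
compliance_agent = {"db-01": "compliant", "web-01": "ok", "hvac-07": "ok"}

diff = compare_views(vuln_agent, compliance_agent)
print(diff)
```

Here `db-01` is flagged by one agent and cleared by the other, while `web-02` and `hvac-07` each exist for only one of them. A check like this only detects the divergence; deciding which view is right still requires an authoritative record.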

In a recent survey of 1,200 IT and security professionals, 46% identified data quality as a key barrier to scaling agentic AI, up 9 points year over year. The problem is growing faster than the solutions.

This Is Not Just About AI

The data prerequisite is not unique to AI agents. It applies to any automation. Every workflow you automate, whether it’s powered by a large language model or a simple if/then rule, inherits the quality of the data it operates on.

An automated patch deployment that cannot see 40% of your endpoints does not need AI to fail. A configuration compliance check running against stale inventory data does not need machine learning to produce false confidence. Automation amplifies whatever you feed it.

What AI agents change is the cost of getting it wrong. An agent that can reason about a system it cannot fully see will produce confident errors at a speed and scale that manual processes never could.

What “Ready” Actually Looks Like

The organizations that will see real ROI from automation in the next 24 months are not the ones with the most sophisticated agents. They are the ones who asked the agent’s first question before the agent did. Three properties separate their data from everyone else’s.

- Complete. Every asset, every environment, every network segment. Actually discovered, not sampled or partially scanned.

- Current. Continuously validated, reflecting what is running right now. Not last week’s snapshot or the quarterly audit.

- Consistent. One authoritative record that every team and every agent works from. Not three competing inventories feeding three different automation workflows with three different versions of the truth.
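These three properties can be turned into rough, measurable checks. The sketch below is one possible framing under stated assumptions: a set of discovered asset IDs stands in for ground truth, each data source maps asset IDs to a last-seen timestamp, and "current" means seen within a configurable window. The `readiness` function, the source names, and the 24-hour threshold are illustrative choices, not a standard.

```python
from datetime import datetime, timedelta, timezone


def readiness(discovered, sources, now, max_age=timedelta(days=1)):
    """Score asset data on completeness, currency, and consistency.

    discovered: set of asset IDs found by discovery (assumed ground truth)
    sources:    {source_name: {asset_id: last_seen_datetime}}
    """
    recorded = set().union(*(s.keys() for s in sources.values()))
    last_seen = [ts for s in sources.values() for ts in s.values()]
    return {
        # Complete: share of discovered assets that appear in any source.
        "complete": len(recorded & discovered) / len(discovered),
        # Current: share of records refreshed within max_age.
        "current": sum(now - ts <= max_age for ts in last_seen) / len(last_seen),
        # Consistent: every source lists exactly the same set of assets.
        "consistent": len({frozenset(s) for s in sources.values()}) == 1,
    }


# Hypothetical data: two sources that disagree, one of them stale.
now = datetime(2025, 1, 1, tzinfo=timezone.utc)
sources = {
    "cmdb": {"srv-1": now - timedelta(hours=2), "srv-2": now - timedelta(days=9)},
    "scanner": {"srv-1": now - timedelta(hours=1), "srv-3": now - timedelta(hours=3)},
}
discovered = {"srv-1", "srv-2", "srv-3", "srv-4"}

scores = readiness(discovered, sources, now)
print(scores)
```

With this sample data, three of four discovered assets are recorded, three of four records are fresh, and the two sources do not agree on what exists, so the data falls short on all three properties.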

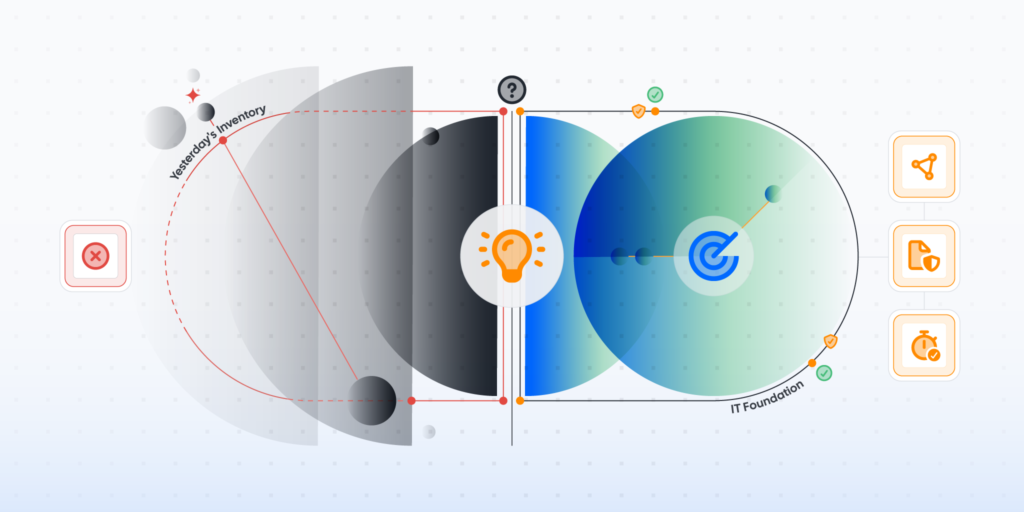

How Lansweeper Is Building Toward This

For twenty years, we have built a system of visibility: discovering every asset across IT, OT, IoT, and cloud, without requiring agents or credentials on every device. That foundation now spans 175 million devices across 30,000 environments.

Visibility alone is not enough. An asset record without business context is a row in a spreadsheet. That is why we are now building the system of context, enriching raw asset data with ownership, risk posture, business criticality, and cross-tool correlation so that organizations know what they have, what it means, and what is exposed.

Context without action is just a dashboard. The system of agency is where this comes together. With Lansweeper Lens and our recently launched MCP server, which has plugins for Atlassian, Claude, and soon other platforms, we are enabling AI agents and automated workflows to work with asset intelligence directly, through structured, machine-readable data they can query, reason over, and act on.

The agentic era will not wait for your data to catch up. The question is not whether to automate. It is whether your organization knows itself well enough for automation to help rather than harm.